How to Write a Strong Hypothesis | Guide & Examples

Published on 6 May 2022 by Shona McCombes.

A hypothesis is a statement that can be tested by scientific research. If you want to test a relationship between two or more variables, you need to write hypotheses before you start your experiment or data collection.

Table of contents

- What is a hypothesis?

- Developing a hypothesis (with example)

- Hypothesis examples

- Frequently asked questions about writing hypotheses

A hypothesis states your predictions about what your research will find. It is a tentative answer to your research question that has not yet been tested. For some research projects, you might have to write several hypotheses that address different aspects of your research question.

A hypothesis is not just a guess – it should be based on existing theories and knowledge. It also has to be testable, which means you can support or refute it through scientific research methods (such as experiments, observations, and statistical analysis of data).

Variables in hypotheses

Hypotheses propose a relationship between two or more variables. An independent variable is something the researcher changes or controls. A dependent variable is something the researcher observes and measures.

For example, in a hypothesis that links sun exposure to happiness, the independent variable is exposure to the sun (the assumed cause) and the dependent variable is the level of happiness (the assumed effect).

Step 1: Ask a question

Writing a hypothesis begins with a research question that you want to answer. The question should be focused, specific, and researchable within the constraints of your project.

Step 2: Do some preliminary research

Your initial answer to the question should be based on what is already known about the topic. Look for theories and previous studies to help you form educated assumptions about what your research will find.

At this stage, you might construct a conceptual framework to identify which variables you will study and what you think the relationships are between them. Sometimes, you’ll have to operationalise more complex constructs.

Step 3: Formulate your hypothesis

Now you should have some idea of what you expect to find. Write your initial answer to the question in a clear, concise sentence.

Step 4: Refine your hypothesis

You need to make sure your hypothesis is specific and testable. There are various ways of phrasing a hypothesis, but all the terms you use should have clear definitions, and the hypothesis should contain:

- The relevant variables

- The specific group being studied

- The predicted outcome of the experiment or analysis

Step 5: Phrase your hypothesis in three ways

To identify the variables, you can write a simple prediction in if … then form. The first part of the sentence states the independent variable and the second part states the dependent variable.

In academic research, hypotheses are more commonly phrased in terms of correlations or effects, where you directly state the predicted relationship between variables.

If you are comparing two groups, the hypothesis can state what difference you expect to find between them.

Step 6: Write a null hypothesis

If your research involves statistical hypothesis testing, you will also have to write a null hypothesis. The null hypothesis is the default position that there is no association between the variables. The null hypothesis is written as H0, while the alternative hypothesis is H1 or Ha.

Hypothesis testing is a formal procedure for investigating our ideas about the world using statistics. It is used by scientists to test specific predictions, called hypotheses, by calculating how likely it is that a pattern or relationship between variables could have arisen by chance.
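To make "could have arisen by chance" concrete, here is a minimal sketch of one possible procedure, an exact permutation test, written in plain Python. The happiness scores and the function name are hypothetical, invented only for illustration; the article does not prescribe this particular test, and a real study would choose a test suited to its design and sample size.

```python
from itertools import combinations
from statistics import mean


def exact_permutation_p_value(group_a, group_b):
    """Two-sided exact permutation test for a difference in group means.

    Under H0 (no association), group labels are interchangeable, so we
    enumerate every way of splitting the pooled scores into two groups and
    count how often the split is at least as extreme as the observed one.
    """
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    observed = abs(mean(group_a) - mean(group_b))
    total = 0
    at_least_as_extreme = 0
    for idx in combinations(range(len(pooled)), n_a):
        chosen = set(idx)
        a = [pooled[i] for i in chosen]
        b = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        total += 1
        # Small tolerance guards against floating-point rounding.
        if abs(mean(a) - mean(b)) >= observed - 1e-12:
            at_least_as_extreme += 1
    return at_least_as_extreme / total


# Hypothetical happiness scores (1-10) for high and low sun exposure.
high_sun = [7, 8, 9, 8]
low_sun = [4, 5, 6, 5]

p_value = exact_permutation_p_value(high_sun, low_sun)
print(f"p = {p_value:.4f}")
```

A small p-value means that a difference this large would rarely appear if H0 were true, which counts as evidence against H0. It is not the probability that H0 itself is true.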

A research hypothesis is your proposed answer to your research question. The research hypothesis usually includes an explanation (‘x affects y because …’).

A statistical hypothesis, on the other hand, is a mathematical statement about a population parameter. Statistical hypotheses always come in pairs: the null and alternative hypotheses. In a well-designed study, the statistical hypotheses correspond logically to the research hypothesis.

McCombes, S. (2022, May 06). How to Write a Strong Hypothesis | Guide & Examples. Scribbr. Retrieved 11 November 2024, from https://www.scribbr.co.uk/research-methods/hypothesis-writing/

How to Write a Research Hypothesis: Good & Bad Examples

What is a research hypothesis?

A research hypothesis is an attempt at explaining a phenomenon or the relationships between phenomena/variables in the real world. Hypotheses are sometimes called “educated guesses”, but they are in fact (or let’s say they should be) based on previous observations, existing theories, scientific evidence, and logic. A research hypothesis is also not a prediction; rather, predictions are (or should be) based on clearly formulated hypotheses. For example, “We tested the hypothesis that KLF2 knockout mice would show deficiencies in heart development” is an assumption or prediction, not a hypothesis.

The research hypothesis at the basis of this prediction is “the product of the KLF2 gene is involved in the development of the cardiovascular system in mice”—and this hypothesis is probably (hopefully) based on a clear observation, such as that mice with low levels of Kruppel-like factor 2 (which KLF2 codes for) seem to have heart problems. From this hypothesis, you can derive the idea that a mouse in which this particular gene does not function cannot develop a normal cardiovascular system, and then make the prediction that we started with.

What is the difference between a hypothesis and a prediction?

You might think that these are very subtle differences, and you will certainly come across many publications that do not contain an actual hypothesis or do not make these distinctions correctly. But considering that the formulation and testing of hypotheses is an integral part of the scientific method, it is good to be aware of the concepts underlying this approach. The two hallmarks of a scientific hypothesis are falsifiability (an evaluation standard that was introduced by the philosopher of science Karl Popper in 1934) and testability. If you cannot use experiments or data to decide whether an idea is true or false, then it is not a hypothesis (or at least it is a very bad one).

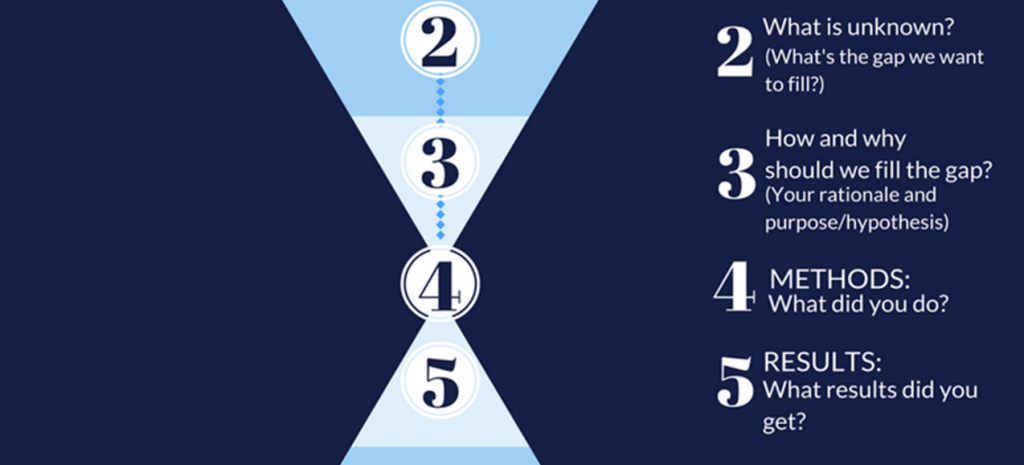

So, in a nutshell, you (1) look at existing evidence/theories, (2) come up with a hypothesis, (3) make a prediction that allows you to (4) design an experiment or data analysis to test it, and (5) come to a conclusion. Of course, not all studies have hypotheses (there is also exploratory or hypothesis-generating research), and you do not necessarily have to state your hypothesis as such in your paper.

But for the sake of understanding the principles of the scientific method, let’s first take a closer look at the different types of hypotheses that research articles refer to and then give you a step-by-step guide for how to formulate a strong hypothesis for your own paper.

Types of Research Hypotheses

Hypotheses can be simple, which means they describe the relationship between one single independent variable (the one you observe variations in or plan to manipulate) and one single dependent variable (the one you expect to be affected by the variations/manipulation). If there are more variables on either side, you are dealing with a complex hypothesis. You can also distinguish hypotheses according to the kind of relationship between the variables you are interested in (e.g., causal or associative). But apart from these variations, we are usually interested in what is called the “alternative hypothesis” and, in contrast to that, the “null hypothesis”. If you think these two should be listed the other way round, then you are right, logically speaking: the alternative should surely come second. However, since this is the hypothesis we (as researchers) are usually interested in, let’s start from there.

Alternative Hypothesis

If you predict a relationship between two variables in your study, then the research hypothesis that you formulate to describe that relationship is your alternative hypothesis (usually H1 in statistical terms). The goal of your hypothesis testing is thus to demonstrate that there is sufficient evidence that supports the alternative hypothesis, rather than evidence for the possibility that there is no such relationship. The alternative hypothesis is usually the research hypothesis of a study and is based on the literature, previous observations, and widely known theories.

Null Hypothesis

The hypothesis that describes the other possible outcome, that is, that your variables are not related, is the null hypothesis (H0). Based on your findings, you choose between the two hypotheses. Usually that means that if your prediction was correct, you reject the null hypothesis and accept the alternative. Make sure, however, that you are not getting lost at this step of the thinking process: If your prediction is that there will be no difference or change, then you are trying to find support for the null hypothesis and reject H1.

Directional Hypothesis

While the null hypothesis is obviously “static”, the alternative hypothesis can specify a direction for the observed relationship between variables—for example, that mice with higher expression levels of a certain protein are more active than those with lower levels. This is then called a one-tailed hypothesis.

Another example for a directional one-tailed alternative hypothesis would be that

H1: Attending private classes before important exams has a positive effect on performance.

Your null hypothesis would then be that

H0: Attending private classes before important exams has no effect or a negative effect on performance.

Nondirectional Hypothesis

A nondirectional hypothesis does not specify the direction of the potentially observed effect, only that there is a relationship between the studied variables—this is called a two-tailed hypothesis. For instance, if you are studying a new drug that has shown some effects on pathways involved in a certain condition (e.g., anxiety) in vitro in the lab, but you can’t say for sure whether it will have the same effects in an animal model or maybe induce other/side effects that you can’t predict and potentially increase anxiety levels instead, you could state the two hypotheses like this:

H1: The drug, so far tested only in vitro, affects anxiety levels (in either direction) in an anxiety mouse model.

You then test this nondirectional alternative hypothesis against the null hypothesis:

H0: The drug, so far tested only in vitro, has no effect on anxiety levels in an anxiety mouse model.
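The practical difference between a directional (one-tailed) and a nondirectional (two-tailed) hypothesis shows up in how the p-value is counted. The sketch below is one way to illustrate this with an exact permutation test in plain Python. The exam scores and the function name are hypothetical, and the permutation test is an assumption chosen for simplicity, not a method named in the article.

```python
from itertools import combinations
from statistics import mean


def permutation_p_values(treated, control):
    """Return one-tailed ("treated > control") and two-tailed p-values."""
    pooled = list(treated) + list(control)
    n_t = len(treated)
    observed = mean(treated) - mean(control)

    # Difference in means for every possible relabelling of the pooled data.
    diffs = []
    for idx in combinations(range(len(pooled)), n_t):
        chosen = set(idx)
        t = [pooled[i] for i in chosen]
        c = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        diffs.append(mean(t) - mean(c))

    eps = 1e-12  # tolerance for floating-point comparisons
    # One-tailed: only differences in the predicted direction count.
    one_tailed = sum(d >= observed - eps for d in diffs) / len(diffs)
    # Two-tailed: large differences in either direction count.
    two_tailed = sum(abs(d) >= abs(observed) - eps for d in diffs) / len(diffs)
    return {
        "observed": observed,
        "one_tailed_greater": one_tailed,
        "two_tailed": two_tailed,
    }


# Hypothetical exam scores with and without private classes.
with_classes = [78, 85, 90, 88]
without_classes = [70, 75, 80, 72]

result = permutation_p_values(with_classes, without_classes)
print(result)
```

With the same data, the two-tailed p-value is never smaller than the one-tailed one, because it also counts extreme outcomes in the direction you did not predict. For that reason, the direction of a one-tailed hypothesis should be fixed before you look at the data.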

How to Write a Hypothesis for a Research Paper

Now that we understand the important distinctions between different kinds of research hypotheses, let’s look at a simple process of how to write a hypothesis.

Writing a Hypothesis Step 1:

Ask a question, based on earlier research. Research always starts with a question, but one that takes into account what is already known about a topic or phenomenon. For example, if you are interested in whether people who have pets are happier than those who don’t, do a literature search and find out what has already been demonstrated. You will probably realize that yes, there is quite a bit of research that shows a relationship between happiness and owning a pet, and there are even studies that show that owning a dog is more beneficial than owning a cat! Let’s say you are so intrigued by this finding that you wonder:

What is it that makes dog owners even happier than cat owners?

Let’s move on to Step 2 and find an answer to that question.

Writing a Hypothesis Step 2:

Formulate a strong hypothesis by answering your own question. Again, you don’t want to make things up, take unicorns into account, or repeat/ignore what has already been done. Looking at the dog-vs-cat papers your literature search returned, you see that most studies are based on self-report questionnaires on personality traits, mental health, and life satisfaction. What you don’t find is any data on actual (mental or physical) health measures, and no experiments. You therefore come up with the carefully thought-through hypothesis that it may be the lifestyle of dog owners, which includes walking their dog several times per day, engaging in fun and healthy activities such as agility competitions, and taking them on trips, that gives them that extra boost in happiness. You could therefore answer your question in the following way:

Dog owners are happier than cat owners because of the dog-related activities they engage in.

Now you have to verify that your hypothesis fulfills the two requirements we introduced at the beginning of this resource article: falsifiability and testability. If it can’t be wrong and can’t be tested, it’s not a hypothesis. We are lucky, however, because yes, we can test whether owning a dog but not engaging in any of those activities leads to lower levels of happiness or well-being than owning a dog and playing and running around with them or taking them on trips.

Writing a Hypothesis Step 3:

Make your predictions and define your variables. We have verified that we can test our hypothesis, but now we have to define all the relevant variables, design our experiment or data analysis, and make precise predictions. You could, for example, decide to study dog owners (not surprising at this point), let them fill in questionnaires about their lifestyle as well as their life satisfaction (as other studies did), and then compare two groups of active and inactive dog owners. Alternatively, if you want to go beyond the data that earlier studies produced and analyzed and directly manipulate the activity level of your dog owners to study the effect of that manipulation, you could invite them to your lab, select groups of participants with similar lifestyles, make them change their lifestyle (e.g., couch potato dog owners start agility classes, very active ones have to refrain from any fun activities for a certain period of time) and assess their happiness levels before and after the intervention. In both cases, your independent variable would be “level of engagement in fun activities with the dog” and your dependent variable would be happiness or well-being.
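As a rough illustration of the first design (comparing active and inactive dog owners), the sketch below treats the group label as the independent variable and a questionnaire score as the dependent variable, then summarises the difference with the group means and Cohen's d, a standard effect-size measure. All scores and names are hypothetical, and Cohen's d is an assumption chosen here for illustration; the article does not specify an analysis.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(group_1, group_2):
    """Standardised mean difference using the pooled sample variance."""
    n1, n2 = len(group_1), len(group_2)
    pooled_var = (
        (n1 - 1) * variance(group_1) + (n2 - 1) * variance(group_2)
    ) / (n1 + n2 - 2)
    return (mean(group_1) - mean(group_2)) / sqrt(pooled_var)


# Independent variable: level of engagement in activities (group label).
# Dependent variable: self-rated happiness on a 1-10 scale (hypothetical data).
happiness_by_activity = {
    "active": [8, 7, 9, 8],
    "inactive": [6, 5, 7, 6],
}

active = happiness_by_activity["active"]
inactive = happiness_by_activity["inactive"]

mean_difference = mean(active) - mean(inactive)
effect_size = cohens_d(active, inactive)

print(f"mean difference = {mean_difference}, Cohen's d = {effect_size:.2f}")
```

Writing the variables down this explicitly before collecting data makes the prediction precise: the hypothesis predicts a positive mean difference, and a difference near zero or a negative one would count against it.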

Examples of a Good and Bad Hypothesis

Let’s look at a few examples of good and bad hypotheses to get you started.

Good Hypothesis Examples

Bad Hypothesis Examples

Tips for Writing a Research Hypothesis

If you understood the distinction between a hypothesis and a prediction we made at the beginning of this article, then you will have no problem formulating your hypotheses and predictions correctly. To refresh your memory: We have to (1) look at existing evidence, (2) come up with a hypothesis, (3) make a prediction, and (4) design an experiment. For example, you could summarize your dog/happiness study like this:

(1) While research suggests that dog owners are happier than cat owners, there are no reports on what factors drive this difference. (2) We hypothesized that it is the fun activities that many dog owners (but very few cat owners) engage in with their pets that increase their happiness levels. (3) We thus predicted that preventing very active dog owners from engaging in such activities for some time and making very inactive dog owners take up such activities would lead to a decrease and an increase in their overall self-ratings of happiness, respectively. (4) To test this, we invited dog owners into our lab, assessed their mental and emotional well-being through questionnaires, and then assigned them to an “active” and an “inactive” group, depending on…

Note that you use “we hypothesize” only for your hypothesis, not for your experimental prediction, and “would” or “if – then” only for your prediction, not your hypothesis. A hypothesis that states that something “would” affect something else sounds as if you don’t have enough confidence to make a clear statement, in which case you can’t expect your readers to believe in your research either. Write in the present tense, don’t use modal verbs that express varying degrees of certainty (such as may, might, or could), and remember that you are not drawing a carefully hedged conclusion but making a clear statement that you then, in a way, try to disprove. And if that happens, that is not something to fear but an important part of the scientific process.

Similarly, don’t use “we hypothesize” when you explain the implications of your research or make predictions in the conclusion section of your manuscript, since these are clearly not hypotheses in the true sense of the word. As we said earlier, you will find that many authors of academic articles do not seem to care too much about these rather subtle distinctions, but thinking very clearly about your own research will not only help you write better but also ensure that even that infamous Reviewer 2 will find fewer reasons to nitpick about your manuscript.
